How Granola thinks about designing agents

Toby

Robert

Xiuting

May 6

In late April we launched the new Granola Chat. It's smarter, much more capable, and rebuilt from the ground up to be agentic.

It can now work across all your notes and transcripts over months and years, whether you're looking for a quick answer or asking something more open-ended.

We're excited about what this unlocks, but it also surfaces a deeper question: how should an agent actually work with this kind of context?

A concrete tradeoff

When rebuilding Granola Chat, we had a choice: should the agent read 10 meetings in detail, or 100 at a high level?

Transcripts are the most vivid reenactment of what happened in a meeting, but they are messy. More importantly, they contain roughly 10x as many tokens as meeting notes.

We chose 100.

Granola has always been about capturing context from conversations. The next challenge is making that context useful. That is ultimately an agent design problem.

Designing agents for individuals vs at scale

It has never been easier to build an agent for yourself.

When it is your own setup, you can give it access to everything, tune it around your workflows, and tolerate its quirks. You know what you meant when you asked a vague question. You know where the relevant information probably lives. If it is slow, brittle or occasionally strange, you can usually work around it.

Designing an agent that works well for hundreds of thousands of users is a different problem entirely.

You cannot assume clean inputs. You cannot assume users know the best way to phrase a request. You cannot assume infinite cost or latency. And you cannot rely on each user patiently adapting to the system's weaknesses.

Most importantly, you cannot afford to break trust.

What makes an agent "good"

A personal agent can rely on the user to compensate for its flaws. A product agent cannot.

Chris, one of our founders, often frames agent quality internally in terms of failure modes rather than raw intelligence. We tend to think about outputs across two dimensions: was it useful, and was it honest about its limits?

Those two questions give us four categories of agent output:

- Useful and comprehensive

- Useful but incomplete

- Incomplete, and clearly so

- Wrong, but confident

The first is ideal. The second is often acceptable. The third can still be useful because the user understands the limit. The fourth is the one to avoid.

An answer that sounds certain while being wrong can break trust quickly, and trust is much harder to rebuild than it is to lose. For us, a good agent is not just one that helps when things go right. It is one that behaves well when things go wrong.
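To make the taxonomy concrete, for example when labelling answers in an evaluation set, here is a minimal Python sketch. The three boolean inputs (`correct`, `complete`, `flags_limits`) and the rule that maps them to a category are assumptions for illustration, not Granola's grading scheme. Treating a wrong but clearly hedged answer as "incomplete, and clearly so" is likewise a judgment call.

```python
from enum import Enum


class OutputCategory(Enum):
    """The four output categories, in the order the post lists them."""
    USEFUL_AND_COMPREHENSIVE = 1
    USEFUL_BUT_INCOMPLETE = 2
    INCOMPLETE_AND_CLEARLY_SO = 3
    WRONG_BUT_CONFIDENT = 4


def categorize(correct: bool, complete: bool, flags_limits: bool) -> OutputCategory:
    """Hypothetical labelling rule for a graded agent answer.

    correct:      the claims in the answer hold up against the sources
    complete:     the answer covers everything relevant to the question
    flags_limits: the answer tells the user where it may be incomplete
    """
    if correct and complete:
        return OutputCategory.USEFUL_AND_COMPREHENSIVE
    if flags_limits:
        # The user can see the limit, so they know how far to trust the answer.
        return OutputCategory.INCOMPLETE_AND_CLEARLY_SO
    if correct:
        # Right as far as it goes, but silent about what it missed.
        return OutputCategory.USEFUL_BUT_INCOMPLETE
    # Wrong, and nothing warns the user: the category to avoid.
    return OutputCategory.WRONG_BUT_CONFIDENT
```

With labels like these, an evaluation could, for instance, treat any answer that lands in `WRONG_BUT_CONFIDENT` as a regression, even when the average answer quality improves.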

That framing shapes many of the tradeoffs we made when building Agentic Chat.

Key tradeoffs

Most agent design tradeoffs are not good vs bad. More often, they mean choosing between two good things you cannot fully have at the same time.

Breadth over fidelity

There is a tradeoff between giving agents more detail and giving them more coverage. Transcripts contain the highest-fidelity version of a conversation: every sentence, interruption and aside is there. In some cases, that level of detail is useful. But transcripts are also far more expensive to process. A transcript can contain roughly 10x as many tokens as structured meeting notes.

That creates a practical choice. Should the agent read 10 meetings in full detail, or 100 meetings at a higher level?

We think the second option is often more useful. Many workplace questions are not solved by perfect recall of one conversation. They are solved by understanding patterns across many conversations.

So in many cases, we prioritize seeing more of the system over zooming in on a single part.
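With a fixed context budget, the ~10x ratio turns this into simple arithmetic: the budget that fits 10 transcripts fits about 100 sets of notes. The minimal Python sketch below expresses a breadth-first policy under that assumption: cover as many ranked meetings as possible with notes, then spend any leftover budget upgrading the top-ranked ones to full transcripts. `Meeting`, `plan_context`, and the token counts are hypothetical; the post only states the approximate ratio.

```python
from dataclasses import dataclass


@dataclass
class Meeting:
    title: str
    notes_tokens: int       # size of the structured meeting notes
    transcript_tokens: int  # size of the full transcript (roughly 10x the notes)


def plan_context(ranked: list[Meeting], budget: int) -> dict[str, str]:
    """Breadth first: notes for as many meetings as fit, in rank order,
    then upgrade top-ranked meetings to transcripts if budget remains."""
    plan: dict[str, str] = {}
    used = 0
    # Pass 1: coverage. Add notes until the next meeting would exceed the budget.
    for m in ranked:
        if used + m.notes_tokens > budget:
            break
        plan[m.title] = "notes"
        used += m.notes_tokens
    # Pass 2: fidelity. Swap notes for transcripts where the extra tokens still fit.
    for m in ranked:
        if plan.get(m.title) != "notes":
            break  # reached meetings that were not covered at all
        extra = m.transcript_tokens - m.notes_tokens
        if used + extra <= budget:
            plan[m.title] = "transcript"
            used += extra
    return plan
```

Assuming 1,000-token notes, 10,000-token transcripts and a 100,000-token budget, `plan_context` covers 100 meetings with notes, while reading transcripts alone would cover only 10. With a smaller candidate set, the spare budget goes to the highest-ranked meetings first.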

Search over completeness

The ideal answer to many queries would be simple: read everything, then respond.

In practice, that does not scale well on cost, latency or reliability.

Instead, we think about retrieval more like navigation. Start broad, identify where the relevant information is likely to live, inspect the most promising areas, then go deeper only when needed.

Internally, that often looks like: Search → shortlist → read → repeat

The agent behaves less like a system brute-forcing context, and more like a person trying to find the right information efficiently. That means it can spend depth where depth matters, rather than everywhere by default.
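The search → shortlist → read → repeat loop can be sketched in Python as follows. This is an illustration under stated assumptions, not Granola's implementation: `search`, `read` and `next_query` are hypothetical stand-ins for a retriever, a meeting loader, and a model call that decides whether to go deeper, and `shortlist_size` and `max_rounds` are arbitrary illustrative limits.

```python
from typing import Callable, Optional

# Hypothetical interfaces (assumptions, not a real Granola API):
#   search(query)        -> list of (meeting_id, relevance_score)
#   read(meeting_id)     -> the text the agent takes from that meeting
#   next_query(q, notes) -> a follow-up query, or None when the agent has enough
SearchFn = Callable[[str], list[tuple[str, float]]]
ReadFn = Callable[[str], str]
NextQueryFn = Callable[[str, list[str]], Optional[str]]


def navigate(query: str, search: SearchFn, read: ReadFn, next_query: NextQueryFn,
             shortlist_size: int = 5, max_rounds: int = 3) -> tuple[list[str], list[str]]:
    """Search -> shortlist -> read -> repeat, stopping when no more depth is needed."""
    seen: set[str] = set()
    findings: list[str] = []
    reviewed: list[str] = []
    for _ in range(max_rounds):
        # Search broadly, then shortlist the most promising meetings not yet read.
        ranked = sorted(search(query), key=lambda hit: hit[1], reverse=True)
        shortlist = [hit_id for hit_id, _ in ranked if hit_id not in seen][:shortlist_size]
        if not shortlist:
            break
        # Read only the shortlist, recording exactly what was reviewed.
        for meeting_id in shortlist:
            seen.add(meeting_id)
            reviewed.append(meeting_id)
            findings.append(read(meeting_id))
        # Decide whether to go deeper, and where.
        follow_up = next_query(query, findings)
        if follow_up is None:
            break
        query = follow_up
    return findings, reviewed
```

Meetings that never make a shortlist are never read, which is where the savings on cost and latency come from. Because `reviewed` records exactly which meetings were read, it is also the raw material for telling the user what an answer is based on.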

Transparency over confidence

Another common temptation in AI products is to smooth over uncertainty: return a clean answer, hide the messy process underneath, and project confidence even when confidence is not warranted. We think that breaks trust quickly.

Agents do not know what they do not know. They can make assumptions too early, stop searching too soon, or infer certainty from partial evidence. So, rather than hiding those limits, we bias toward making them visible.

That might mean showing what sources were used, how much context was reviewed, or where the answer may be incomplete.

A less polished answer that is honest about its limits is usually more useful than a polished answer that overstates what it knows. Good agents are defined less by what they know, and more by how they handle what they do not know. We would rather be clear about limits than pretend they do not exist.
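One way to carry those signals (sources used, how much context was reviewed, where the answer may be incomplete) alongside an answer is a small structured record. The minimal Python sketch below is an illustration, not how Granola implements this: `CoverageNote`, its fields, and the wording of the rendered text are all hypothetical. It treats an answer as complete only when every matching meeting was read and no other limits are known.

```python
from dataclasses import dataclass, field


@dataclass
class CoverageNote:
    """Hypothetical record of what an answer is based on (not Granola's implementation)."""
    matched: int                 # meetings the search surfaced as possibly relevant
    reviewed: list[str]          # meetings the agent actually read
    gaps: list[str] = field(default_factory=list)  # known limits, in plain words

    @property
    def complete(self) -> bool:
        # Complete only if every match was read and no other limits are known.
        return len(self.reviewed) >= self.matched and not self.gaps

    def render(self) -> str:
        lines = [f"Reviewed {len(self.reviewed)} of {self.matched} matching meetings."]
        if self.reviewed:
            lines.append("Sources: " + ", ".join(self.reviewed))
        if not self.complete:
            lines.append("This answer may be incomplete:")
            # Fall back to a generic warning when unread matches are the only gap.
            lines.extend(f"- {gap}" for gap in self.gaps or ["not every matching meeting was read"])
        return "\n".join(lines)
```

For example, `CoverageNote(matched=40, reviewed=["Launch retro", "Design review"], gaps=["Meetings before March were not searched"]).render()` (illustrative values) states that 2 of 40 matches were read, names both sources, and flags the answer as possibly incomplete instead of presenting it as final.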

Showing the work

One distinctive part of the new Chat is Coverage Notes. We think this is a pretty unique agentic feature (and one we would love to see more of elsewhere).

When writing our internal #shipped post, we wanted to make sure we thanked everyone who helped with the launch. Granola is a growing company now, so we can no longer just look around the floor and see who is there.

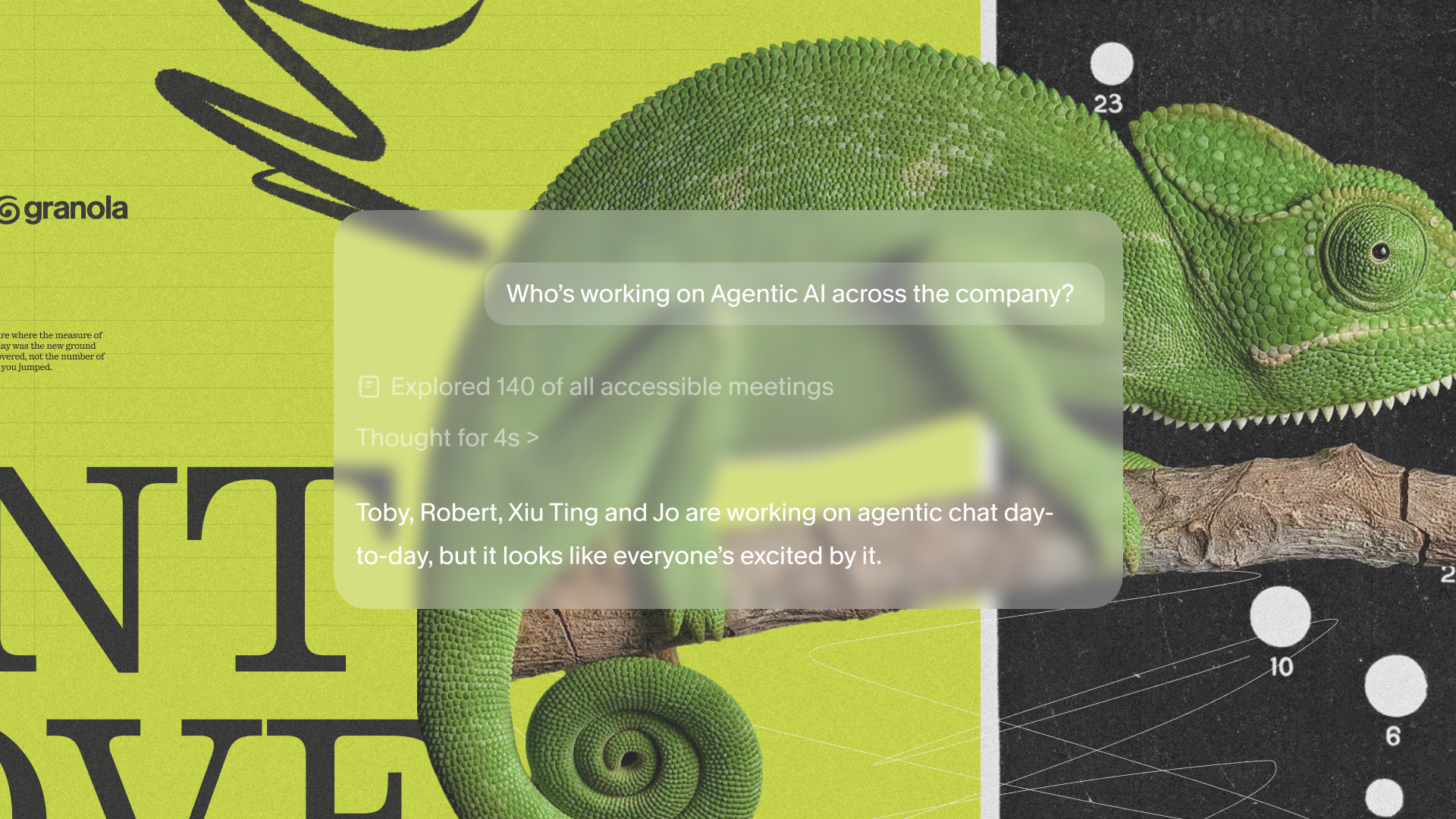

We are now a company of over 70 people who are Granola-ing everything, all the time, so this is also a good test of what Chat can do. When we ran this query, Chat thought for 38 seconds. That is a lot.

When asked a question like this, Chat does not return a single confident answer. Instead, it shows what it looked at.

This lets the user make an informed choice: (i) accept the agent's recommendation, (ii) ask the agent to do more work, or (iii) click through to the sources themselves.

This is a direct response to the worst failure mode in the taxonomy above. We believe that useful but incomplete is acceptable (for now); confident and wrong is not.

In our testing, we have also noticed that it changes user behavior: people click into sources, follow threads, and build their own understanding. That is something we really care about.

Chat: under the hood

On the surface, Chat looks familiar. Under the hood, it behaves very differently. It can go further back in time, it can pull from a wider set of context, and it can handle queries that previously broke.

As an example, a salesperson asking "what's the state of this customer?" now spans months or even years of conversations across the whole company, not just recent meetings in a folder.

Capturing context was the first step. Working intelligently with that context is the harder one. Solving that means making careful decisions about how an agent should behave. Agentic Chat is one step in that direction.

Toby, AI Engineer

Robert, AI Engineer

Xiuting, Product Manager